Lens, one of three visual discovery features Pinterest introduced last month, has rolled out to all of the social network’s U.S. iPhone and Android users with some new tweaks, although the feature is still in beta.

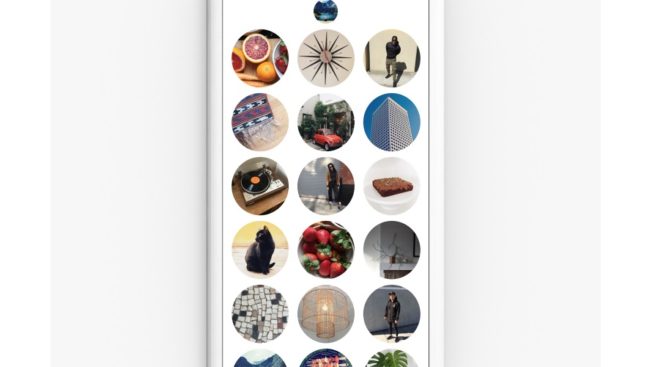

Steven Ramkumar, engineering manager for visual search and discovery engineering, said in a blog post that users can now tap the Lens icon and swipe up to access new idea Lenses (pictured above) to try out.

Pinterest users can also now tag the objects they photograph, which will enhance the data used to populate Lens.

Users should update their applications to version 6.20
